Materializing the ghost in the machine

Neuroprosthetic research strengthens virtual hand feedback loop

In the video accompaniment to a research paper, the smile that flashes across a man’s face as he wiggles his virtual fingers for the first time captures the essence of the work being done by Bradley Greger’s team – it has the potential to be life changing for those who have lost a limb.

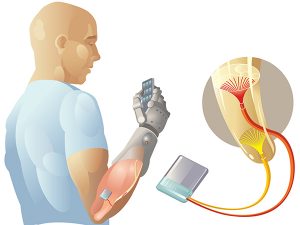

But according to Greger, an associate professor of biomedical engineering in ASU’s Ira A. Fulton Schools of Engineering, having “super amazing” robotic limbs isn’t enough. “The hard part is the interface, getting the prosthetics to talk to the nerves,” he says. “It’s not just telling the fingers to move, the brain has to know the fingers have moved as directed.”

Video: This video serves as supplementary material for a paper published in the Journal of Neural Engineering. The participant controls a virtual prosthetic hand by thinking about moving the amputated hand, and the resulting nerve signals are recorded by microelectrodes. A computer algorithm decodes the signals and controls the virtual prosthetic hand. Sensations of touch on the amputated hand were also generated in patients by electrically stimulating sensory nerve fibers using an implanted microelectrode array.

Despite the excitement of being able to manipulate virtual fingers, or even fingers attached to a functioning prosthetic device, it is not the same as feeling like the device is part of your own body.

Research by Greger’s team, published in the March issue of the Journal of Neural Engineering, seeks to establish bidirectional communication between a user and a new prosthetic limb that is capable of performing more than 20 different movements.

The paper was co-authored by Tyler Davis, Heather Ward, Douglas Hutchinson, David Warren, Kevin O’Neill III, Taylor Scheinblum, Gregory Clark, Richard Normann and Greger, all at the University of Utah. Greger, a neural engineer, joined the School of Biological and Health Systems Engineering at ASU three years ago.

In the nervous system there is a “closed loop” of sensation, decision and action. This process is carried out by a variety of sensory and motor neurons, along with interneurons, which enable communication with the central nervous system.

“Imagine the kind of neural computation it takes to perform what most would consider the simple act of typing on your computer,” says Greger. “We’re moving the dial toward that level of control.”

The published study involved implanting an array of 96 electrodes for 30 days into the median and ulnar nerves in the arms of two amputees. The electrodes were stimulated both individually and in groups with varying degrees of amplitude and frequency designed to determine how the participants could perceive the stimulation. Neural activity was recorded during intended movements of the subjects’ phantom fingers and 13 specific movements were decoded as the subjects controlled the individual fingers of a virtual robotic hand.
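To give a rough sense of what "decoding" a movement from recorded activity can mean, here is a minimal sketch in Python. It is not the method used in the paper, which this article does not describe. It assumes a simple nearest-centroid classifier and entirely synthetic firing rates; only the counts of 96 electrodes and 13 movements come from the study, and every function name and numeric setting is hypothetical.

```python
import random

N_CHANNELS = 96   # electrode count reported in the study
N_MOVEMENTS = 13  # number of decoded movements reported in the study


def make_trial(templates, movement, rng, noise=1.0):
    """Simulate one trial: the movement's template rates plus Gaussian noise."""
    return [rate + rng.gauss(0.0, noise) for rate in templates[movement]]


def fit_centroids(trials, labels):
    """Average the training trials of each movement into one centroid."""
    sums, counts = {}, {}
    for x, y in zip(trials, labels):
        acc = sums.setdefault(y, [0.0] * len(x))
        for i, value in enumerate(x):
            acc[i] += value
        counts[y] = counts.get(y, 0) + 1
    return {y: [v / counts[y] for v in acc] for y, acc in sums.items()}


def decode(centroids, x):
    """Return the movement whose centroid is closest in squared Euclidean distance."""
    def dist(centroid):
        return sum((a - b) ** 2 for a, b in zip(x, centroid))
    return min(centroids, key=lambda y: dist(centroids[y]))


rng = random.Random(0)

# Hypothetical mean firing rate (Hz) on each channel for each movement.
templates = [[rng.uniform(0.0, 20.0) for _ in range(N_CHANNELS)]
             for _ in range(N_MOVEMENTS)]

# Ten synthetic training trials per movement.
train_x, train_y = [], []
for movement in range(N_MOVEMENTS):
    for _ in range(10):
        train_x.append(make_trial(templates, movement, rng))
        train_y.append(movement)

centroids = fit_centroids(train_x, train_y)

# Five fresh synthetic trials per movement to check the decoder.
test_trials = [(make_trial(templates, m, rng), m)
               for m in range(N_MOVEMENTS) for _ in range(5)]
accuracy = sum(decode(centroids, x) == m for x, m in test_trials) / len(test_trials)
print(f"decoded {accuracy:.0%} of synthetic test trials correctly")
```

Real nerve recordings are far noisier and less separable than this toy data, so the sketch only illustrates the idea of matching a new pattern of activity to previously observed patterns.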

The motor and sensory information provided by the implanted microelectrode arrays indicates that patients outfitted with a highly dexterous prosthetic limb controlled with a similar bidirectional peripheral nerve interface might begin to think of the prosthesis as an extension of themselves rather than a piece of hardware, explained Greger.

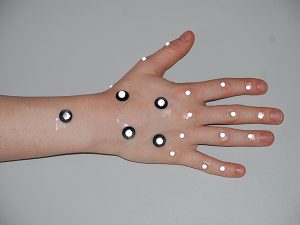

An array of implanted electrodes records neural activity during intended movement of phantom fingers. Image courtesy of Bradley Greger.

Collaboration is key

“We have come a long way in our understanding of the nervous system, but we’ve reached the point where collaboration across disciplines has become essential,” says Greger. “The day of the lone professor making the Nobel Prize-winning discovery is gone. Now scientists, engineers and clinicians are working together as technology advances.” The team assembled for the research reported on in the recent journal publication involved investigators with expertise in neuroscience, bioengineering and surgery.

Access to the wide collaboration available across the Fulton Schools was a key component in Greger’s decision to join the biomedical engineering faculty. “At ASU, there is amazing research happening in the areas of robotics and computer and software engineering,” he says.

Greger cites the achievements of Professor Marco Santello, director of SBHSE, particularly Santello’s “amazing understanding of how the hand works.” In future research, Greger hopes to use SoftHand, which Santello developed with the researchers at the University of Pisa and the Italian Institute of Technology, as the prosthetic hand controlled by neural signals.

“The environment here differs from the classical academic research environment,” he says. “ASU has made structural changes to the university, such as implementing schools that span multiple classical departments, in order to promote interdisciplinary research in the academic community.”

Also important to Greger is the opportunity to work with clinical collaborators at the Barrow Neurological Institute, Phoenix Children’s Hospital and the Mayo Clinic here in the greater Phoenix area. He has several projects underway at BNI and PCH, and has begun working with Shelley Noland, M.D., a Mayo Clinic plastic surgeon who specializes in hand surgery, on continuing the published hand prosthesis research.

“One of the most challenging hurdles in moving from research to getting prosthetics into the hands of those who need them is navigating the FDA approval process,” says Greger.

The rigor exercised by the Mayo Clinic in its research initiatives is unparalleled, according to Greger, who describes Mayo as “massively patient-care focused” with rigorous, controlled research parameters. “At Mayo, nothing is haphazard,” he says. “These rigorous standards help with navigating the FDA approval process and facilitate producing research results that can be readily duplicated.”

Virtual Reality in the Neural Engineering Lab

For the next phase of the neuroprosthetic study, markers are applied to the patient’s functioning hand in order to measure hand posture. These measurements are used to position the virtual hand. Photographer: Kevin O’Neill

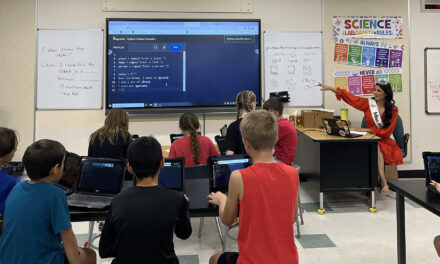

“We’re now at the stage in this process where we ask patients to mirror movements between hands,” explains Greger. “We can’t record what the amputated hand is doing, but we can record what a healthy hand is doing.” So, for instance, asking the patient to wave both hands simultaneously, or to point at an object with both hands, will be integral to the latest tech employed in the feedback loop: an Oculus Rift virtual reality headset.

The advantage of the virtual reality headset is that the patient is able to interact directly with his or her virtual limb rather than by watching it on a screen.

Kevin O’Neill, who was a bioengineering undergraduate on the research team at Utah and is now a doctoral student at ASU, is developing the technology that not only allows the patient to see what his or her virtual limb is doing, but also “decodes” the neural messages that enable the motion to happen.

“At first, when patients are learning to manipulate their virtual hands, they will be asked to strictly mirror movements of a healthy hand,” explained O’Neill. “Once we have learned what information the signals contain, we can build a neural decoding system and have patients drive the virtual representation of a missing limb independently of a healthy hand.”
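The two stages O’Neill describes can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the article does not say which decoding algorithm the lab uses, so the sketch swaps in an ordinary least-squares fit, uses three made-up signal channels and one made-up joint angle, and generates all data synthetically. The helper names are hypothetical.

```python
import random


def solve(a, b):
    """Solve the linear system a @ w = b by Gauss-Jordan elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                factor = m[r][col] / m[col][col]
                for c in range(col, n + 1):
                    m[r][c] -= factor * m[col][c]
    return [m[i][n] / m[i][i] for i in range(n)]


def fit_decoder(signals, angles):
    """Least-squares weights mapping signal features (plus a bias term) to a joint angle."""
    rows = [s + [1.0] for s in signals]
    n = len(rows[0])
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(n)] for i in range(n)]
    xty = [sum(r[i] * y for r, y in zip(rows, angles)) for i in range(n)]
    return solve(xtx, xty)


def decode_angle(weights, signal):
    """Predict a joint angle from signal features using the fitted weights."""
    return sum(w * s for w, s in zip(weights, signal + [1.0]))


rng = random.Random(1)

# Assumed "ground truth" relating three signal channels to one finger angle.
TRUE_WEIGHTS = [2.0, -1.0, 0.5]
TRUE_BIAS = 10.0

# Stage 1: mirrored movements. The healthy hand supplies the measured angle
# while the signals are recorded at the same moment (both synthetic here).
signals = [[rng.uniform(0.0, 1.0) for _ in TRUE_WEIGHTS] for _ in range(200)]
angles = [sum(w * s for w, s in zip(TRUE_WEIGHTS, sig)) + TRUE_BIAS + rng.gauss(0.0, 0.01)
          for sig in signals]
weights = fit_decoder(signals, angles)

# Stage 2: independent control. Only the signals are available, and the
# decoder alone sets the virtual finger angle.
new_signal = [0.2, 0.7, 0.4]
predicted = decode_angle(weights, new_signal)
expected = sum(w * s for w, s in zip(TRUE_WEIGHTS, new_signal)) + TRUE_BIAS
print(f"predicted angle {predicted:.2f}, assumed true angle {expected:.2f}")
```

The point of the mirroring stage is visible in the code: the healthy hand provides the labeled angles that the fit needs, and once the weights are learned, the healthy hand is no longer required.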

Denise Oswalt, a bioengineering doctoral student in the Neural Engineering Lab, demonstrates how patients will use the Oculus Rift headset to learn controlled movement of phantom fingers. Photographer: Kevin O’Neill.

For Greger, the most important next steps are getting the technology into human trials and then creating effective limbs that are available to patients at an affordable price.

“There are prosthetic limbs that are amazing, like the DEKA arm or the arm from Johns Hopkins’ Applied Physics Lab. But the costs can be in the hundred-thousand-dollar range,” he says. “We’re working toward limbs that are accessible both financially and in terms of usability. We want to create limbs that patients will use as true extensions of themselves.”

Media Contact

Terry Grant, [email protected]

480-727-4089

Ira A. Fulton Schools of Engineering