Data DNA to provide security for generative modeling

Above: A team of ASU researchers led by Assistant Professor Yi "Max" Ren is working to create digital DNA for generative models that can be used to create things like deepfake photos and videos. Their work will give attribution to the creators of the models and in instances of malicious activity, will allow for accountability. Image courtesy of Shutterstock

What are the consequences if machines are intelligent but don’t have identities? What are the possible ways to hold machines, and their creators, accountable for their actions taken upon human society?

This is part of the work being explored by Yi “Max” Ren, an assistant professor of aerospace and mechanical engineering in the Ira A. Fulton Schools of Engineering at Arizona State University. Ren and a team of ASU researchers were recently awarded a National Science Foundation grant to study the attribution and secure training of generative models.

Generative models capture distributions of data from a range of high-dimensional, real-world content, such as the cat images that dominate the web, human speeches, driving behavior and material microstructures, among many other types of content. And by doing so, the models gain the ability to synthesize new content similar to the authentic content.

This ability of generative models has inspired many creative ideas that are materialized in the real world, from ultra-high resolution photography to self-driving vehicles and to computational materials discovery. However, the advance of generative models has created two major socio-technical challenges.

First, generative models have been used to create deepfake technology. Accordingly, one is no longer able to completely determine whether an image, a video, an audio recording, or a chat message has been created by a human being or artificial intelligence. In fact, generative models are reported to have been used for espionage operations and malicious personation.

Second, the training of such models may require collaboration among multiple data providers to improve their performance and reduce biases. Such collaboration, however, may expose proprietary datasets (e.g., medical records) and raise privacy concerns.

With the support of the NSF grant, Fulton Schools researchers are working to make generative models more regulated during their dissemination, and more data secure during their creation.

Ren and co-PIs Ni Trieu and Yezhou “YZ” Yang, both assistant professors of computer science and engineering, will address the two open challenges that come with the recent advances and use of generative models for synthesizing media and scientific content.

The three researchers represent a strong interdisciplinary collaboration among computer scientists in artificial intelligence (Yang), security (Trieu) and optimization (Ren). All three have been working on research areas related to this project for years.

“We expect that the outcomes of our study will help evaluate the technical feasibility of legislative instruments that are being developed to tackle the emerging deepfake crisis and concerns about data privacy,” Ren says.

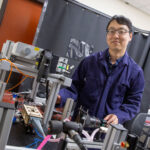

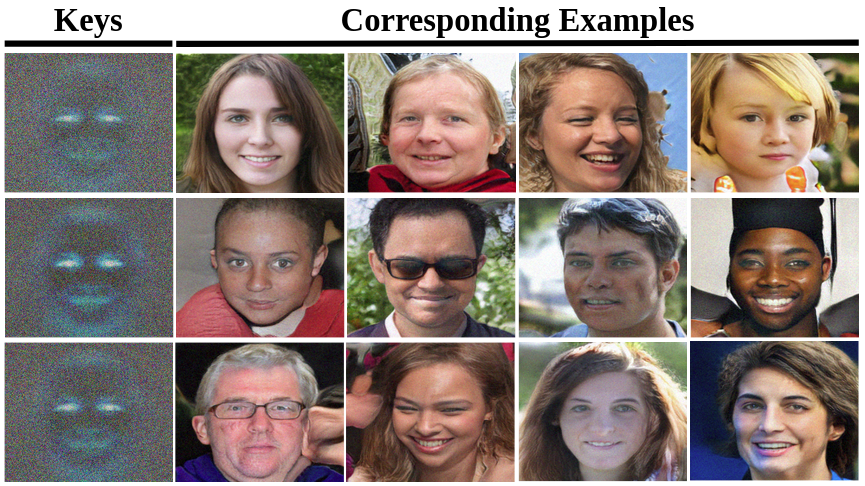

In each row above, the creator’s “key” on the left is included in each of the four images to the right. The imperceptible key, which works similarly to how DNA can identify an individual, allows for the attribution of the creator in the event that one of their images is used maliciously. Image created by Changhoon Kim/ASU

The project will develop new mathematical theories and computational tools to assess the feasibility of two connected solutions to these challenges. If successful, the outcomes of the project will provide technical guidance for the design of future regulation to address the secure development and dissemination of generative models.

The team’s solution to the first challenge is “model attribution.” When an app is developed, unique keys are added by an independent registry, creating a “watermark” on each copy of the app that gets disseminated to the end users. This allows the registry to attribute the owners of generated content.

“Watermarking is a system design problem where we need to find a good balance between three metrics that trade off: attribution accuracy, generation quality and model capacity,” says Ren.

He describes the challenge of creating this balance by envisioning each copy of an app as a dumpling; these dumplings all surround the original authentic dataset.

“For higher attribution accuracy, we would like these dumplings to be apart from each other, which causes either generation quality to drop as they move away from the authentic dataset, or model capacity to drop if we only keep models within a certain distance,” says Ren. “We are trying to improve this tradeoff using the fact that semantic differences are not measured by Euclidean distances.”

As an example, if the AI created a series of faces, having a string of red hair on all the generated faces as an identifying watermark could mean a large deviation from the authentic data in the Euclidean space, but semantically the contents are still of high quality as the faces still would appear nearly the same as one without the watermark.

“This insight may allow us to learn a way of packing many ‘dumplings’, so that they are guaranteed to have no overlap, allowing for high attribution accuracy while remaining semantically valid, and creating high generation quality,” says Ren.

The second challenge involves data privacy and how to ensure the safety of private data when creating generative models.

The research team is studying the secure multi-party training of generative models. Data privacy and training scalability will be balanced through the design of security-friendly model architectures and learning losses.

“We propose the secure generation of keys and the secure training of generative models, in the context where data providers split and encrypt their data across multiple servers on which a computation will be performed,” says Yang. “To this end, we study scalable secure computation algorithms tailored for generative models.”

According to Ren, the scope of this research has the ability to advance into other areas that may be currently viewed as science fiction, but could quickly evolve into reality as watermarks evolve into data “DNA” — working like a genetic key to tell where data originated.

“There will be a need for artificial DNA for androids in the future,” says Ren, who believes androids will form future societies alongside humans. “This topic has been discussed in many sci-fi novels, movies and games. Oftentimes, the fictional discussions involve how adversarial androids may remove their DNA allowing them to attack maliciously, without the humans knowing their origin, and how the society evolves as they respond to those attacks. Our study on deepfakes is among the first to address this issue.”