A soft approach to a hard problem in autonomous vehicles

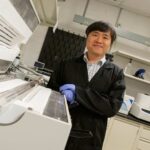

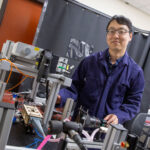

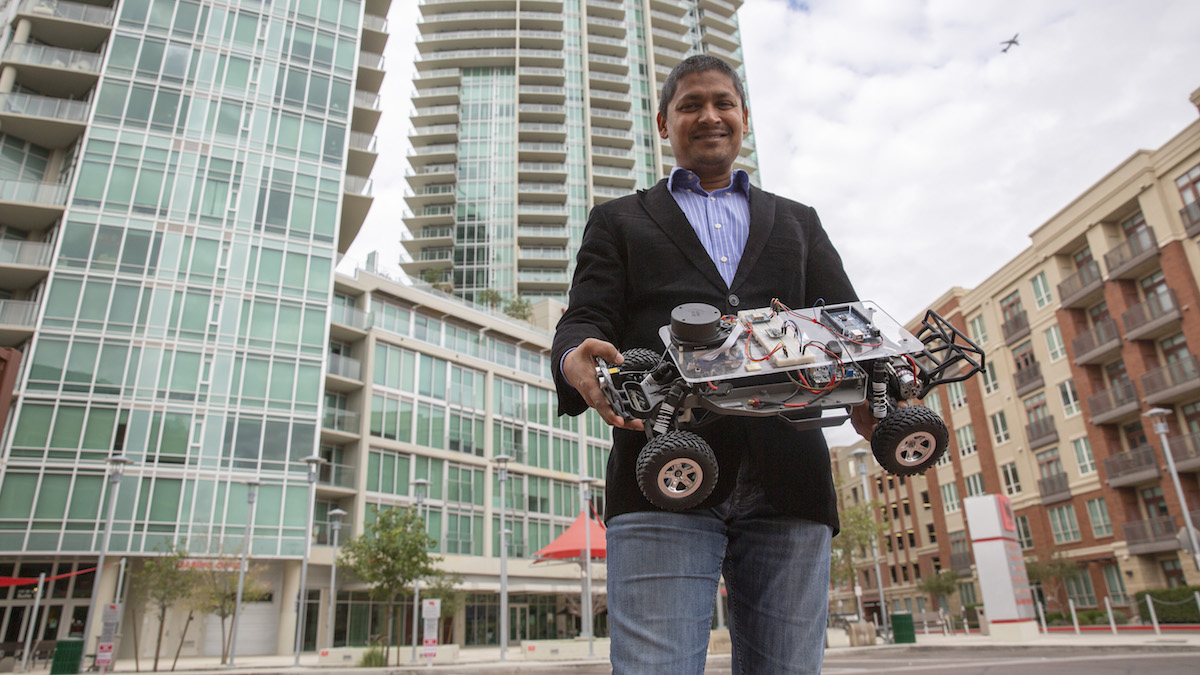

Above: Aviral Shrivastava, an associate professor of computer science and engineering in the Ira A. Fulton Schools of Engineering at Arizona State University, developed software techniques that can help resolve errors in hardware caused by stray cosmic particles. When particularly hard-to-detect hardware errors, called faults, happen in cyber-physical systems such as autonomous vehicles, it’s important to detect and resolve them correctly to prevent property damage and loss of life. Photographer: Erika Gronek/ASU

One guaranteed part of dealing with computer systems is that hardware problems happen.

In a smartphone, hardware errors are inconvenient, but not life-threatening. However, if an autonomous vehicle suffers from a hardware failure, it could be highly dangerous.

As autonomous vehicles are “essentially computers on wheels,” it’s important to know when a hardware error might cause accidents, says Aviral Shrivastava, an associate professor of computer science and engineering in the Ira A. Fulton Schools of Engineering at Arizona State University.

While he’s dealing with hardware problems, Shrivastava says new software strategies are actually the key to powerful and efficient methods to prevent hardware errors in critical systems.

A fault in our cars

Unbeknownst to many, outer space poses a problem for computing systems — even here on Earth and in computer systems like the ones that enable autonomous driving.

Cosmic particles are increasingly affecting integrated circuits — the fundamental components of electronics — as circuits shrink in size and the technology advances. An impact from an invasive particle in just the right spot can cause components and software to behave differently. These are known as “faults” and can vary in severity.

Approximately 90% of faults don’t cause any problems and are known as “masked” faults because another part of the system compensates for the error. These faults are negligible — for example, some might corrupt something in the computer memory that is never accessed again.

Other hardware faults are much easier to spot, as they can cause the software running on the hardware systems to start behaving in an obviously different way or to stop working entirely.

One type of fault called Silent Data Corruption, or SDC, is the “sneakiest” kind of fault because the system appears to be behaving normally, but its results, or output, are slightly wrong. Shrivastava says they’re the most important type of fault to study and understand because they are “hard to even detect.”

SDCs are especially troubling for safety-critical cyber-physical systems, which are computing systems that interact with the physical world, such as autonomous vehicles. Such systems need to be able to work around hardware faults, or at the very least detect that something is wrong.

Because SDCs are hard to detect, it can be difficult to say which errors they cause. Some experts believe an SDC, potentially caused by cosmic particles, may have been behind the unintended acceleration problem in Toyota vehicles that led to a massive recall in 2009.

“Reducing the number of SDCs is a meaningful metric for evaluating the effectiveness of a protection technique,” Shrivastava says.

Back in 2015, the common wisdom was that only hardware protection techniques are “strong enough” to protect against hardware errors, and that software techniques are not effective.

But Shrivastava did not buy this argument. He reasoned that if a fault does not rise up to the software level, then it is not important. So any fault that matters (i.e., one that changes the program output) must reach the domain of software; and, once it’s there, software techniques should be able to detect it and fix it.

“There is no fundamental reason as to why software techniques cannot be effective,” Shrivastava says.

He saw merit in using software techniques for protection, reasoning that even though hardware techniques may be effective, they only protect the hardware they are built into.

On the other hand, software techniques are universally applicable. Anyone can use them on any past, present or future processor. They can even be applied piecemeal to reduce their overhead in energy usage and cost. For example, you can apply software fixes selectively, using them only on safety-critical applications, or even only on the specific safety-critical parts of an application.

“This ‘flexibility of application’ is not possible for techniques that are already implemented in the hardware,” Shrivastava says. “Once implemented, they always cause overhead.”
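To make the idea of selective protection concrete, here is a minimal Python sketch. It is an illustration only, not the research team's implementation: the decorator `duplicate_and_compare` and the functions `braking_distance` and `playlist_length` are hypothetical names invented for this example. Only the safety-critical function pays the cost of running twice.

```python
import functools

# Hypothetical call counters, used only to make the overhead visible.
calls = {"braking_distance": 0, "playlist_length": 0}


def duplicate_and_compare(func):
    """Hypothetical decorator: run func twice and compare the two results."""
    @functools.wraps(func)
    def wrapper(*args, **kwargs):
        first = func(*args, **kwargs)
        second = func(*args, **kwargs)
        if first != second:
            raise RuntimeError(f"{func.__name__}: redundant results disagree")
        return first
    return wrapper


@duplicate_and_compare  # safety-critical: protected, so it executes twice
def braking_distance(speed_mps, decel_mps2):
    calls["braking_distance"] += 1
    return speed_mps ** 2 / (2 * decel_mps2)


def playlist_length(tracks):  # not safety-critical: left unprotected
    calls["playlist_length"] += 1
    return len(tracks)
```

Calling `braking_distance(20.0, 8.0)` executes the function body twice before returning, while `playlist_length(["a", "b"])` executes once. The extra work is spent only where a wrong answer could be dangerous.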

Shrivastava made this his research goal — to develop effective software protection techniques — for his National Science Foundation CAREER Award project.

A software touch fixes hardware problems

Shrivastava and his doctoral students, Moslem Didehban and Reiley Jeyapaul, started by evaluating the existing software protection techniques, and soon found that these techniques were already able to detect 90% of SDC faults. In general, 90% is good, but when human lives are on the line, 90% just isn’t good enough.

After carefully analyzing the weak points of existing techniques, they started to systematically fix the holes.

Over the course of a six-year NSF CAREER Award project, the research team developed a set of software techniques that are as effective as hardware techniques. They also produced a large body of repeatable evidence to demonstrate their methods’ reliability.

“In this project, we were able to develop software techniques that are very effective, and are able to achieve protection comparable to hardware protection techniques,” Shrivastava says.

One method, called near-Zero silent Data Corruption, or nZDC, was published in the 2016 Design Automation Conference proceedings. Shrivastava and Didehban proposed a technique that duplicates program instructions and compares results intermittently to check for errors. nZDC was demonstrated to detect more than 99.9% of the SDCs.
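The general idea of duplicating a computation and comparing the copies can be sketched in a few lines of Python. This is a simplified illustration, not nZDC itself: the names `flip_bit`, `checked_sum` and `FaultDetected` are assumptions made for this example, and the "particle strike" is simulated by flipping one bit in one of the two copies.

```python
class FaultDetected(Exception):
    """Raised when the two redundant copies of a result disagree."""


def flip_bit(value, bit):
    """Simulate a particle strike by flipping one bit of an integer."""
    return value ^ (1 << bit)


def checked_sum(values, fault_bit=None):
    """Sum the values twice, then compare the copies before using the result."""
    primary = 0
    shadow = 0
    for v in values:
        primary += v  # original instruction
        shadow += v   # duplicated instruction
    if fault_bit is not None:
        # Inject a fault into one copy only, for demonstration purposes.
        primary = flip_bit(primary, fault_bit)
    if primary != shadow:
        raise FaultDetected("redundant copies disagree")
    return primary
```

Without an injected fault, `checked_sum([1, 2, 3])` returns the sum as usual. With `fault_bit` set, the corrupted copy no longer matches its shadow, so the error is caught instead of silently propagating into the output.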

When an error is found, their other technique, Nemesis (described in a 2017 International Conference on Computer-Aided Design paper), runs a root-cause analysis of the error and determines whether it is even possible to fix it. Nemesis demonstrated an ability to recover from 96% of SDCs. For the remaining 4%, it declared its inability to recover.

While replication is a well-known technique to protect programs, the devil is in the details; most previous works have gotten the details wrong, and even a small change can render the protection ineffective. Shrivastava observed that many previous techniques sometimes recovered incorrectly. It is also not possible to recover from every error, and a wrong recovery defeats the purpose of the protection. That is why he inserted a special routine to determine if it is possible to recover correctly.
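The principle of recovering only when a correct recovery is possible can be illustrated with a generic majority vote over redundant copies. This sketch is not the Nemesis algorithm; it swaps in simple voting to show the idea, and the names `recover` and `UnrecoverableFault` are assumptions made for this example.

```python
from collections import Counter


class UnrecoverableFault(Exception):
    """Raised when no value is trustworthy enough to recover to."""


def recover(copies):
    """Return the value held by a strict majority of copies, or refuse."""
    value, votes = Counter(copies).most_common(1)[0]
    if votes * 2 > len(copies):
        return value  # a strict majority agrees, so recovery is safe
    # No majority: guessing could produce a wrong recovery, so declare failure.
    raise UnrecoverableFault("copies disagree with no majority")
```

With copies `[7, 7, 9]` the function recovers the value 7. With `[1, 2, 3]` it raises `UnrecoverableFault` rather than picking a value at random, mirroring the idea that declaring an inability to recover is safer than recovering incorrectly.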

Shrivastava and his team have a strong publishing record of more than 20 conference papers and 12 journal articles on the topic of software recovery techniques, including Didehban and Jeyapaul’s doctoral dissertations and six other students’ master’s theses.

Their results have the potential to impact how autonomous vehicle systems are certified for reliability. A hardware-based fault monitoring technique is currently the only way to get certified.

“I am of the opinion that effective software techniques should also be allowed,” Shrivastava says.

The meticulous nature of Shrivastava’s six-year effort to catch elusive computing errors with new software techniques has emboldened him to urge the research community to value commitment and perseverance over quick results and return on investment.

“It is because of this impatience that so many ‘ineffective’ protection techniques have been proposed,” Shrivastava says. “Research takes a long time, but carefully considering how faults occur and how to best address them can save lives.”