Potential world-changing impacts of artificial intelligence raise promising possibilities and societal challenges

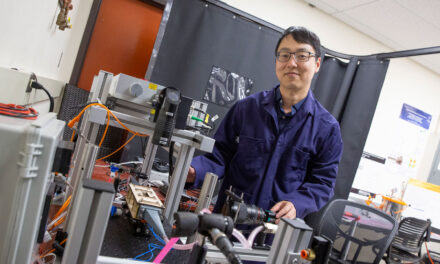

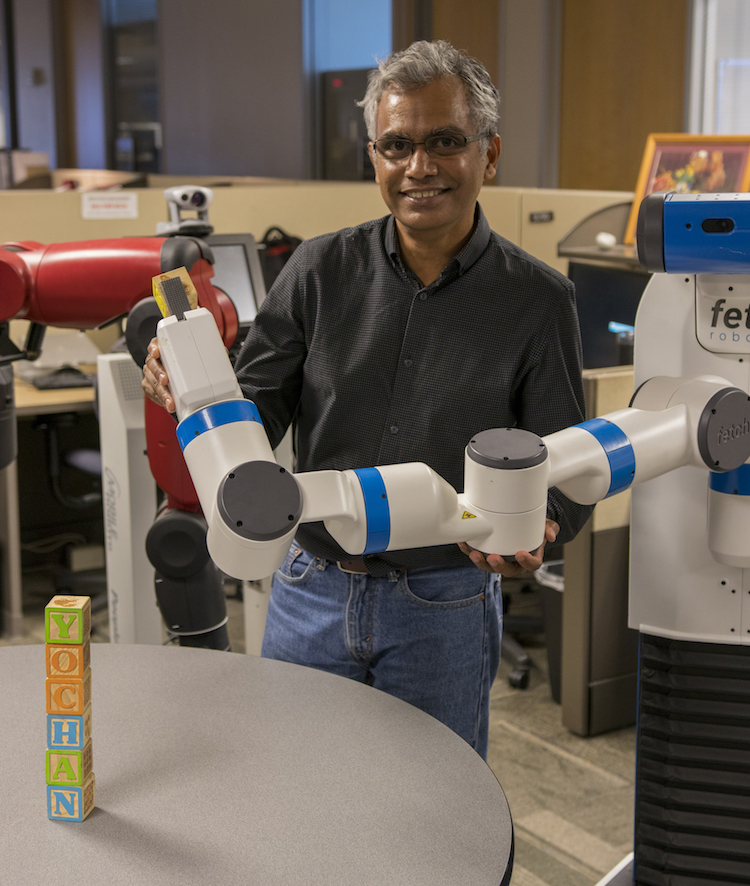

Advances in artificial intelligence technologies are enabling robots to move far beyond merely performing rudimentary repetitive tasks. They can increasingly do complex work that requires keen perception of their environment and interaction with people. Photographer: Marco-Alexis Chaira/ASU

Subbarao Kambhampati is at the midpoint of a two-year term as president of the international Association for the Advancement of Artificial Intelligence (AAAI), the largest organization of scientists, engineers and others in the field.

The professor of computer science and engineering in Arizona State University’s Ira A. Fulton Schools of Engineering is also on the board of trustees of the Partnership on Artificial Intelligence to Benefit People and Society (PAI).

The nonprofit group of representatives from several global technology companies, major universities, prominent foundations and other institutions was formed to promote public understanding of artificial intelligence technology and the establishment of industry-wide best practices and ethics guidelines.

His roles in those organizations have made Kambhampati one of the go-to sources for news media reporting on issues involving the acceleration of advances in artificial intelligence technologies and their potential societal impacts.

He has been doing work in the area — commonly called “AI” — for more than three decades, starting with an undergraduate thesis project in speech-recognition technology.

He’s since done extensive research on automated planning and decision-support systems, and now focuses on developing “human-aware” AI systems to enable people and intelligent machines to work collaboratively.

The following interview is edited from a recent conversation with Kambhampati.

Professor Subbarao Kambhampati with one of the robots used in his lab team’s research aimed at enabling effective collaboration between humans and intelligent robots. The wooden building blocks on the table spell out the name of the lab, Yochan, meaning “thought” or “plan” in the Sanskrit language. Photographer: Marco-Alexis Chaira/ASU

You became president of the AI association at a time when public awareness of these technologies and the issues they raise has exploded. What’s sparking the widespread interest?

AI as a scientific field has actually been around since the 1950s and has made amazing, if fitful, progress in getting machines to show hallmarks of intelligence. The Deep Blue computer’s win over the world chess champion in 1997 was a watershed moment, but even after that, AI remained a staid academic field. Most people didn’t come into direct contact with AI technology until relatively recently.

With the recent advances of AI in perceptual intelligence, we all now have smartphones that can hear and talk back to us, and recognize images. AI is now a ubiquitous part of our everyday lives, so there’s a visceral understanding of its impact.

Plus, it’s a big driver of major industries, right?

In 2008, for instance, few if any tech companies were mentioning investments and involvement in AI in their annual reports or quarterly earnings reports. Today you’ll find about 300 major companies emphasizing their AI projects or ventures in those reports.

The members of the Partnership on Artificial Intelligence, which I am involved with, include Amazon, Facebook, Google’s DeepMind, IBM and Microsoft. So, yes, AI is now a very big deal.

The big question about AI is not only what it means for business and the economy, but also what it portends for society when AI machines are doing more jobs that people used to do. What’s your perspective on that?

Elon Musk (the prominent engineer, inventor and tech entrepreneur) started this trend of AI fears by remarking that what keeps him up at night is the idea of super-intelligent machines that will become more powerful than humans. Then Stephen Hawking (renowned physicist and cosmologist) chimed in. Statements like that, coming from influential people, of course make the public worry.

I don’t take such a pessimistic view. I think AI is going to do a lot of good things. But it is also going to be a very powerful technology that will shape and change our world. So we should remain vigilant about all the ramifications of this powerful technology and work to mitigate unintended consequences. Fortunately, this is a goal shared by both AAAI and PAI.

Garry Kasparov, the former chess champion who was defeated by the Deep Blue computer, writes that we should embrace AI, that it will free people from work so that they can develop their intellectual and creative capabilities. Others are saying the same. Do you agree?

I think Kasparov and others who say this are maybe too optimistic. We see from the past that new technology has taken away certain jobs, but also created new kinds of jobs. But it’s not certain that will always be the case with the proliferation of AI.

It seems clear that some professions are going to disappear, and not just blue-collar jobs like trucking, but also high-paying white-collar jobs. There are going to be many fewer radiologists, because machines are already doing a better job of reading X-rays. Machines can also be much faster and better at doing the kind of information gathering and research now done by paralegals, for instance.

This is why we have to start thinking about how society is going to be restructured if AI technologies and systems are doing much of the work that people once did.

What would such a restructuring look like?

This is quite an open question, and organizations like AAAI and PAI are trying to get ahead of the curve in answering it. I do want to emphasize that I don’t think it is solely the job of AI experts, or of industry, to think about these issues of long-term restructuring. This is something that society at large has to contend with. We also have to realize that the consequences of AI play into already existing social ills such as societal biases, wealth concentration and social alienation. We have to work to make sure that AI moderates rather than amplifies these trends.

What can those in the AI field do proactively to produce the most positive outcomes from the expansion of the technology?

We can take potential impacts into consideration when deciding in what directions we want to take our research and development. Much research now, like mine, is focusing on systems that are not intended to replace humans but to augment and enhance what humans are doing. We want to enable humans and machines to work together to do things better than what humans can do alone.

For AI systems to work with humans, they need to acquire emotional and social intelligence, something humans expect from their co-workers. That’s where human-aware AI comes into play.

What keeps you excited about your research?

I’ve always thought that the biggest questions facing our age are about three fundamental things: the origin of the universe, the origin of life and the nature of intelligence.

AI research takes you to the heart of one of them. In developing AI systems, I get a window into the basic nature of intelligence. That’s why I tell my students that it takes a particularly bad teacher to make AI uninteresting.

That is what hooked me into this work. And now I’m getting the opportunity to go beyond the technical aspects of the field and have a voice on issues of ethics and practices and societal outcomes. That is energizing me even more.